Project no. 507618

DELOS

A Network of Excellence on Digital Libraries

Instrument: Network of Excellence

Thematic Priority: IST-2002-2.3.1.12

Technology-enhanced Learning and Access to Cultural Heritage

D5.3.1: Semantic Interoperability in Digital Library Systems

Original date of deliverable: 28th February 2005

Re-submission date: 29th June 2005

Start Date of Project: 01 January 2004

Duration: 48 Months

Organisation Name of Lead Contractor for this Deliverable: UKOLN, University of Bath

Revision [Revised Final]

Project co-funded by the European Commission within the Sixth Framework Programme (2002-2006)

Dissemination Level: [PU (Public)]

Semantic Interoperability in Digital Library Systems

Authors: Manjula Patel, Traugott Koch, Martin Doerr, Chrisa Tsinaraki

Contributors: Nektarios Gioldasis, Koraljka Golub, Doug Tudhope

Task 3: Semantic Interoperability

WP5: Knowledge Extraction and Semantic Interoperability

DELOS2 Network of Excellence in Digital Libraries

July 2004 – June 2005

Revisions following Review of March 2005

| Date | Version | Modifications |
|---|---|---|
| 28-Feb-2005 | Final | |
| 15-Apr-2005 | Review comments received | |
| Apr-Jun-2005 | Revised Final | Overview changed to Introduction and added subsections on; Changed section 1 from Introduction to Background; Added section 9 on Guidelines and Recommendations; Section 8 on a Research Agenda rewritten; Added the 2 new JPAII activities in WP5 to the Conclusions; References amalgamated, sorted into alphabetical order and moved to end of document; Additional web links added. |

Table of Contents

1.1 Audience, Scope and Purpose

1.2 Relevance to DELOS2 NoE Objectives

3. Importance of Semantic Interoperability in Digital Libraries

3.2 Information Life Cycle Management

3.2.3 Importance of Semantic Interoperability

4.1.1 Universals and Particulars

4.1.5 Schema, Data Model and Conceptual Model

4.2 Constituents of Semantic Interoperability in Digital Library Environments

4.3 Standardization versus Interpretation

4.4 Levels of Semantic Interoperability in Digital Library Environments

4.4.1 Semantic Interoperability and Data Structures

5. Prerequisites to Enhancing Semantic Interoperability

5.1 Standards and Consensus Building

5.2 Role of Foundational and Core Ontologies

5.3 Knowledge Organization Systems

5.3.1 KOS are prerequisites to enhancing Semantic Interoperability

5.3.3 Number and size of KOS and NKOS

5.3.4 Methods and processes applied

5.3.6 Examples of the usage of KOS in Semantic Interoperability Applications

5.5.2 Metadata Schema Registries

5.5.4 Other Terminology Services

5.6 Role of Architecture and Infrastructure

5.6.1 Syntactic Interoperability and Encoding Systems

5.6.2 Digital Resource Identification

5.6.4 Semantic Description of Web Services

6. Methods and Processes to Enhance Semantic Interoperability

6.1 Standardization of metadata schemas, mediation and data warehousing

6.1.4 Schema integration and modular approaches

6.1.5 Mapping, matching and translation

6.1.6 Usage of Foundational and Core Ontologies

6.1.7 KOS Compatibility with Core Ontologies

6.2 Methods applied to KOS, their concepts, terms and relationships

6.2.1 Approaches as found in LIS contexts

6.2.2 Approaches as found in Ontology and Semantic Web contexts

7. Semantic Interoperability in Digital Library Services

8. Implications for a Research Agenda

9. Recommendations and Guidelines

1. Introduction

This report is a state-of-the-art overview of activities and research being undertaken in areas relating to semantic interoperability in digital library systems. It has been undertaken as part of the cluster activity of WP5: Knowledge Extraction and Semantic Interoperability (KESI). The authors and contributors draw on the research expertise and experience of a number of organisations (UKOLN, ICS-FORTH, NETLAB, TUC-MUSIC, University of Glamorgan) as well as several work-packages (WP5: Knowledge Extraction and Semantic Interoperability; WP3: Audio-Visual and Non-traditional Objects) within the DELOS2 NoE.

In addition, a workshop was held [KESI Workshop Sept. 2004] (co-located with ECDL 2004) in order to provide a forum for the discussion of issues relevant to the topic of this report. We are grateful to those who participated in the forum and for their valuable comments, which have helped to shape this report.

Definitions of interoperability, syntactic interoperability and semantic interoperability are presented, noting that semantic interoperability rests fundamentally on the matching of concepts. The NSF Post Digital Libraries Futures Workshop: Wave of the Future [NSF Workshop] has identified semantic interoperability as being of primary importance in digital library research.

1.1 Audience, Scope and Purpose

Although undertaken as part of the activities of WP5, the intended audience of this report is the whole of the DELOS2 NoE and the Digital Library community at large. In fact, many of the issues relating to semantic interoperability in digital library systems are also relevant to other communities. It is therefore a major aim of the report to integrate views from overlapping communities working in the area of semantic interoperability, including: semantic web, artificial intelligence, knowledge representation, ontology, library and information science and computer science. The types of issue that the report has tried to address include:

- Why is semantic interoperability important in digital library systems and how can it be used effectively in these types of information systems?

- An analysis of different types or levels of semantic interoperability

- A clarification of the relationship between syntactic and semantic interoperability

- A description of relevant methodologies, prerequisites, standards and semantic services

- How semantic interoperability in digital library systems can be enhanced

- What are the relevant issues for the DELOS2 NoE and the Digital Library community at large?

1.2 Relevance to DELOS2 NoE Objectives

Section 2 (Network Objectives) of the Technical Annex of the FP6 DELOS2 Network of Excellence identifies a 10-year grand vision for digital libraries:

“digital libraries should enable any citizen to access all human knowledge any time and anywhere, in a friendly, multi-modal, efficient and effective way, by overcoming barriers of distance, language, and culture and by using multiple Internet-connected devices.”

We consider semantic interoperability to be an essential technology in realising the above goal. The overall objective of semantic interoperability is to support complex and advanced, context-sensitive query processing over heterogeneous information resources. The report examines several areas in which semantic interoperability is important in digital library information systems, including: improving the precision of search, enabling advanced search, facilitating reasoning over document collections and knowledge bases, integration of heterogeneous resources, and its relevance in the information life-cycle management process. The report also investigates some theoretical issues such as clarification and selection of relevant terminology, standardisation and interpretation and the differing levels of semantic interoperability in digital library environments. It notes that information structure, language and identifiable semantics are prerequisites to semantic interoperability, as are consensus building and standardisation.

Another of the major objectives of the DELOS network is to integrate and coordinate the ongoing research activities of the major European research teams in the field of digital libraries for the purpose of developing the next generation digital library technologies. Once again, we envisage that semantic interoperability will be crucial to the next generation of digital library technologies, which in turn will be strongly influenced by semantic web technologies.

1.3 Report Structure

Following a general introduction to semantic interoperability and what we hope to achieve from it in digital library systems, we consider its importance in terms of contexts and the information life-cycle in Section 3; also looking at some relevant usage scenarios that have been developed by various projects. Section 4 is concerned with more theoretical issues including: terminology which is currently in use; the constituents of semantic interoperability; advantages and disadvantages of standardization and interpretation and three levels of semantic interoperability in digital library systems (data structures, categorical data and factual data).

We go on to consider some of the prerequisites to enabling and enhancing semantic interoperability in Section 5. These include: standards and consensus building; the role of foundational and core ontologies; knowledge organisation systems (KOS); the role of semantic services; architecture and infrastructure; and access and rights issues. Section 6 investigates the methods and processes that are currently being used to improve semantic interoperability. This section falls into two subsections, the first examining standardization of metadata schemas, mediation and data warehousing, while the second covers methods which are being applied to KOS, their concepts, terms and relationships.

The emerging use of semantic interoperability in digital library services is the topic of Section 7, in which we consider how library services such as: searching, browsing and navigation; information tracking; user interfaces; and automatic indexing and classification are being enhanced and implemented to provide advanced user services. In Section 8 we have attempted to identify gaps and areas that would benefit from further research and attention. Section 9 identifies some guidelines and recommendations that would benefit the DELOS2 NoE in advancing semantic interoperability in digital library systems and thereby working towards its 10-year grand vision (see section 1.2).

2. Background

The Internet, and more particularly the Web, has been instrumental in making widely accessible a vast range of digital resources. However, the current state of affairs is such that the task of pulling together relevant information involves searching for individual bits and pieces of information gleaned from a range of sources and services and manually assembling them into a whole. This task becomes increasingly intractable with the rapid rate at which resources are becoming available online.

Interoperability is therefore a major issue that affects all types of digital information systems, but has gained prominence with the widespread adoption of the Web. It provides the potential for automating many of the tasks that are currently performed manually.

Ouksel and Sheth identify four types of heterogeneity which correspond to four types of potential interoperability [Ouksel and Sheth 2004]:

- System: incompatibilities between hardware and operating systems

- Syntactic: differences in encodings and representation

- Structural: variances in data-models, data structures and schemas

- Semantic: inconsistencies in terminology and meanings

As far as digital libraries are concerned, interoperability is becoming a paramount issue as the Internet unites digital library systems of differing types, run by separate organisations which are geographically distributed all over the world. Federated digital library systems, in the form of co-operating autonomous systems are emerging in a bid to make distributed collections of heterogeneous resources appear to be a single, virtually integrated collection. The benefits to users include query processing over larger, more comprehensive sets of resources as well as the promise of easier to use interfaces that hide systems, syntax and structural differences in the underlying systems.

We define interoperability very broadly as any form of inter-system communication, or the ability of a system to make use of data from a previously unforeseen source. Interoperability in general is concerned with the capability of differing information systems to communicate. This communication may take various forms such as the transfer, exchange, transformation, mediation, migration or integration of information.

The main focus of our attention in this report is semantic interoperability in digital library systems, the goal of which is to facilitate complex and more advanced, context-sensitive query processing over heterogeneous information resources. This is an area that has been identified as being of primary importance in the area of digital library research by the recent NSF Post Digital Libraries Futures Workshop [NSF 2003].

Semantic interoperability is characterised by the capability of different information systems to communicate information consistent with the intended meaning of the encoded information (as intended by the creators or maintainers of the information system). It involves:

- the processing of the shared information so that it is consistent with the intended meaning

- the encoding of queries and presentation of information so that it conforms with the intended meaning regardless of the source of information

Furthermore, an aspect of semantic interoperability between two or more sets of data is a situation where the meaning of the entities or elements, their relationships and values can be established and where some kind of semantically controlled mapping or merging of data is carried out or enabled. The provision of this semantic information and the mapping or merging process determines the degree of semantic coherence in a given service. Consequently, there are different levels of semantic coherence or interoperability. For example, an information transfer system may carry or refer to the necessary semantic information, whereas a system that caters for the integration of information would accumulate information together using a specific mapping or merging effort.
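The integration case described above can be made concrete with a small sketch. It assumes two hypothetical local schemas ("library" and "museum") and a hand-written crosswalk to a shared element set; the field names, the records and the helper functions `to_shared` and `merge` are invented for illustration and are not taken from any system discussed in this report.

```python
# Hypothetical crosswalks: local field name -> shared element name.
# Both schemas and all field names are assumptions made for this sketch.
CROSSWALKS = {
    "library": {"title": "title", "author": "creator", "subject_heading": "subject"},
    "museum": {"object_name": "title", "maker": "creator", "keyword": "subject"},
}


def to_shared(record, source):
    """Rename local fields to shared elements; collect fields with no mapping."""
    crosswalk = CROSSWALKS[source]
    mapped, unmapped = {}, {}
    for field, value in record.items():
        if field in crosswalk:
            mapped[crosswalk[field]] = value
        else:
            unmapped[field] = value
    return mapped, unmapped


def merge(records):
    """Merge (record, source) pairs into one list expressed in shared elements."""
    merged = []
    for record, source in records:
        mapped, unmapped = to_shared(record, source)
        mapped["source"] = source      # keep provenance of each record
        mapped["unmapped"] = unmapped  # semantic information the mapping could not carry
        merged.append(mapped)
    return merged


# Invented example records.
library_record = {"title": "Views of Delos", "author": "Smith, A.", "shelfmark": "X.12"}
museum_record = {"object_name": "Marble lion", "maker": "unknown", "keyword": "sculpture"}

merged = merge([(library_record, "library"), (museum_record, "museum")])
```

The `unmapped` entry gives a crude indication of the degree of semantic coherence reached: the more fields a crosswalk leaves unmapped, the less of the original meaning survives the merge.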

Bergamaschi et al. identify two major problems in sharing and exchanging information in a semantically consistent way [Bergamaschi et al. 1999]:

- how to determine if sources contain semantically related information, that is, information which is related to the same or similar concept(s)

- how to handle semantic heterogeneity to support integration of information and uniform query interfaces

Some of the critical issues in this area relate to providing adequate contextual information, metadata and the development of suitable ontologies. Achieving terminology transparency has been the focus of attention of many mediated systems such as MOMIS (Mediated Environment for Multiple Information Sources) [Bergamaschi et al. 1999], which provides a reconciled view of underlying data sources through a mediated vocabulary that also acts as the terminology for formulating user queries.
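The idea of a mediated vocabulary that doubles as the query terminology can be sketched as follows. This is a minimal illustration under stated assumptions, not a description of how MOMIS itself works: the two sources, their local subject terms, the mediated terms and the function `mediated_search` are all hypothetical.

```python
# Hypothetical mediated vocabulary: each mediated term records the local
# term that each underlying source uses for the same concept.
MEDIATED_VOCABULARY = {
    "painting": {"source_a": "paintings", "source_b": "pictures (painted)"},
    "sculpture": {"source_a": "sculptures", "source_b": "statuary"},
}

# Two invented sources, each indexed with its own local terminology.
SOURCES = {
    "source_a": [
        {"id": "a1", "subject": "paintings"},
        {"id": "a2", "subject": "sculptures"},
    ],
    "source_b": [
        {"id": "b1", "subject": "statuary"},
        {"id": "b2", "subject": "pictures (painted)"},
    ],
}


def mediated_search(term):
    """Translate one mediated term into each source's local term and collect hits."""
    local_terms = MEDIATED_VOCABULARY.get(term, {})
    hits = []
    for source, records in SOURCES.items():
        local = local_terms.get(source)
        if local is None:
            continue  # this source has no term for the concept
        hits.extend(r["id"] for r in records if r["subject"] == local)
    return sorted(hits)
```

The user formulates the query once, in the mediated terminology, and never sees the local terms; for instance `mediated_search("sculpture")` returns records from both sources even though neither indexes anything under that exact word.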

Metadata vocabularies and ontologies are seen as ways of providing semantic context in determining the relevance of resources. Ontologies are usually developed in order to define the meaning of concepts and terms used in a specific domain. The choosing and sharing of vocabulary elements coherently and consistently across applications is known as ontological commitment [Guarino et al. 1994] and is a good basis for semantic interoperability in independent and disparate systems.

Although ontologies have been hailed as the answer to semantic interoperability, concerns are being raised about the sufficiency of (static) ontologies to resolve semantic conflicts, cope with evolving semantics and the dynamic reconciliation of semantics. It is a fact of life that database schemas and other types of information get out of synchronisation with the original semantics as they are used (and misused). How effectively semantic conflicts are resolved (this may need to be done dynamically) will directly affect the inferences and deductions that are performed in answering a user query, and ultimately the results that are returned. Cui et al. describe a system that addresses some of these types of issues [Cui et al. 2002]. DOME (Domain Ontology Management Environment) provides software support for the definition and validation of formal ontologies and ontology mappings to resolve semantic mismatches between terminologies according to the current context. Further, Gal describes a system, CoopWARE [Gal 1999], which investigates issues relating to services that need to adapt ontologies to continuously changing semantics in sources. The semantic model is based on TELOS [Mylopoulos J. et al. 1990], making it flexible enough to support dynamic changes in ontologies.

In this report we have tried to cover the major issues that relate to semantic interoperability in digital library systems. Section 3 begins by looking at the importance of semantic interoperability in terms of providing context; knowledge life-cycle management and some use cases. Section 4 tackles some of the more theoretical and formal issues. Prerequisites, methods and processes to enhance semantics in library information systems are dealt with in sections 5 and 6. Section 7 is concerned with the role of semantic interoperability in digital library services such as searching, browsing and navigation; information tracking; user interfaces; and automatic indexing and classification. We conclude the report by identifying gaps and trying to recommend areas that would benefit from further attention as well as highlighting ways in which the DELOS2 NoE should maximise the use of semantic interoperability in the development of the next generation of digital library systems.

3. Importance of Semantic Interoperability in Digital Libraries

To understand the importance of semantic interoperability in digital libraries, we need to look at different contexts such as the traditional context of subject indexing and access, the integration of heterogeneous information sources in the digital world of the Internet and the context of improvements to the Information Life-Cycle Management. The importance of semantic interoperability in many elements of the information life-cycle is exemplified. Semantic interoperability is also important to many different communities and disciplines beyond Digital Libraries and many of them develop related activities. A suite of use cases from several major projects illustrates the breadth of applications where the need to integrate heterogeneous sources requires efforts to improve semantic interoperability which, in addition, often imply economic benefits.

3.1 Contexts

"Semantic is the key issue in order to solve all heterogeneity problems". (Visser 2004)

Interoperability is an important issue in all information systems and services. Without syntactic interoperability, data and information cannot be handled properly with regard to formats, encodings, properties, values, data types etc., and cannot be merged or exchanged. Without semantic interoperability, the meaning of the language, terminology and metadata values used cannot be negotiated or correctly understood.

Interoperability is an important economic issue as well, as Dempsey [Dempsey, ARLIS 2004] points out: it is necessary to be able to extract a maximum value from investment in metadata, content and services by ensuring that they are sharable, reusable and recombinable. The improved services will allow users to focus on the productive use of resources rather than on the messy mechanics of interaction.

Semantic interoperability has shown its importance in several different contexts, communities and disciplines.

a) It has long been important in the "traditional" context of subject indexing and access, to support search with some degree of semantic precision. Knowledge Organization Systems (KOS) have long been used, for example by abstract and index database providers, to index the content of publications, databases and similar resources and to support subject searching. Online database hosts like Dialog and Silverplatter also began, quite a long time ago, to offer cross-search solutions for subject access to the databases they host. Multilingual search (with the help of multilingual vocabularies) and multidisciplinary/cross-domain search have been addressed as well.

b) A second and more recent context is the need for integration of heterogeneous, often distributed, information sources that became increasingly possible and requested with the development of the Internet.

The Internet, Intranets and in general information networks allow the reference to, usage of and integration of highly heterogeneous information sources plus the creation of new information out of many different sources (Data warehouses, Electronic Data Interchange, Business-to-Business, Peer-to-Peer architectures, Knowledge management systems, Digital Library services, eGovernment services etc.).

The resource environment, e.g. in a university setting, has greatly expanded: besides library catalogues and abstract and index databases, digitized collections, licensed collections, remote preprint archives, institutional repositories, e-reserves, virtual reference, new scholarly resources, learning objects, web-based information and publications, subject gateways etc. have become available and need to be integrated into one seamless information space for the user. Information discovery here requires the ability to navigate across many sources by subject, by name, by place, by resource type or by educational level, with as little custom work, as little pre-coordinated agreement and as little terminological investigation as possible [Dempsey, ARLIS 2004]. A. Sheth describes even more advanced scenarios of navigation by following relationships that emerge dynamically from the integration of resources, rather than by elements common to the various resources.

A solution, a semantically well integrated digital library service, could be implemented either as a more or less centralised, integrated and interoperable information service or as a "recombinant" library [Dempsey 2003] based on distributed and independent services and sources (e.g. based on a Web Services architecture), in which highly specialised presentation, application and content services, supported by common services, would be made to cooperate.

Semantic interoperability would be enabled by terminology services, earlier integral with a particular search service, now potentially externally provided as third-party services. Even a federation of distributed, multilingual, formalised and enriched KOS could be offered as one such service.

c) A third context for Semantic Interoperability activities is improvement to the so-called information life-cycle management. Among other applications, this is often used to structure work in corporate Knowledge Management (see Section 3.2 below).

Semantic interoperability is important in many different communities and disciplines far beyond the Digital Libraries sector, which is the focus of this report. Among these are the Government, Museum, Educational and Corporate or Business sectors, which are already well known for their ambitious efforts.

Concerning Government information systems and eGovernment initiatives, a recent "eGovernment Workshop on semantic interoperability" in Norway (co-organised by the EU Commission) documented good practice and European cooperation [eGovernment 2004]. Semantic interoperability based on data definitions and identification of data and metadata is seen as an important prerequisite to eGovernment services such as: electronic interfaces between the business community and the public sector facilitating exchange of data (e.g. for tax administration, statistics); common repositories of metadata and one-stop-shop web-based services for citizen access to data and metadata.

Semantic interoperability based on conceptual understanding of the shared information, data and knowledge interpretation, ontologies and agents, reconciliation methods and modelling of processes, is mentioned as a key element of EIF: the European Interoperability Framework [EIF 2004]. EIF is a set of standards and guidelines which describe the way in which organisations have agreed, or should agree, to interact with each other complementing national interoperability guidance by focusing on a pan-European dimension.

OntoGov is a recently started EU Sixth Framework Programme STREP project dealing with semantics for the life-cycle design of public services, tool development and the creation of a related domain ontology [OntoGov 2004].

Activities on a national level include: UK GovTalk and E-GIF which provide interoperability and metadata standards via e.g. a Government Category List, a Government Schemas Working Group and an Interoperability Working Group [GovTalk]; a portal of the Walloon Region applying a semantic web approach for interoperability. Leading terminological efforts for the support of Semantic Interoperability are carried out by Canadian [Canada] and Australian government agencies. Considerable semantic interoperability related efforts are undertaken in the GovStat project for the US Bureau of Labor Statistics [Efron et al 2004].

3.2 Information Life Cycle Management

Semantic interoperability is not only important in the "traditional" contexts of subject indexing and subject access to databases and documents, or when integrating heterogeneous information sources for the purpose of information discovery. It seems relevant in most of the stages of the so-called information life cycle.

3.2.1 Terminology

In the literature the terms information life cycle (management) and knowledge life cycle (management) are often used to represent the same concept just as the term knowledge is often used to refer to information.

One should distinguish between data, information and knowledge. According to D. Soergel [Soergel 1985, chapter 2], data is the form and information is the content, whereas knowledge has structure that ties together and integrates individual pieces of an image of the state of affairs and is the basis for action (he explains the nature of information through a cycle of: image - image of the state of affairs - new information based on observation, interpretation - updated image - action). On the other hand, M. Buckland [Buckland 1991] distinguishes between three categories of information, information-as-process, information-as-knowledge, and information-as-thing. He claims that information systems can deal with objects like documents or their representations, which are things, and not with processes or knowledge. Process in this context is the act of informing, and knowledge is what is perceived and communicated in that process.

We use here the term information life cycle management because it is data or information and not knowledge that have actually been incorporated in the so-called knowledge life cycles.

3.2.2 Models

There are many such models, each belonging to a different context and aiming at a different purpose; e.g. there are many life cycle models focusing on storage (the discipline of Computer Science), on information seeking (the discipline of Library and Information Science), information life cycles in companies and governments (the discipline of Knowledge Management) etc.

Information life cycle management models are just theoretical models, while the practical application is a more complicated process. As C. Borgman [2000, p. 108-109] says: the " ...cycle of creating, using, and seeking information can be viewed as a series of stages, but these stages often are iterative. People move back and forth between stages, and they may be actively creating, using and seeking information concurrently. People tend to manage multiple information-related tasks, each of which may be at a different stage in the cycle at any particular time."

We describe two information life cycle management models here, one from the Knowledge Management, and the other from the Library and Information Science community.

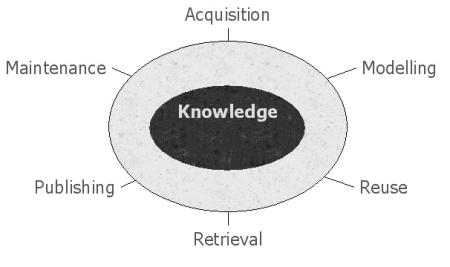

Although the one from Knowledge Management [Shadbolt et al. 2003] is called knowledge life cycle management, it is actually dealing with information as we define it (see above). This model is from a report by Advanced Knowledge Technologies Interdisciplinary Research Collaboration (AKT), focusing on tools and services for managing knowledge throughout its life cycle. Their model comprises six challenges, and serves as a means to classify AKT services and technologies.

They are:

- acquisition,

- modelling,

- reuse,

- retrieval,

- publishing and

- maintenance.

For the different stages of the life cycle, specific "knowledge technologies" are expected to be fruitful. When discussing acquisition, their focus is on harvesting of ontologies from unstructured and semi-structured sources. In modelling they deal with modelling life cycles, and the coordination between Web services, as well as with mapping and merging of ontologies. Reuse refers to reuse of Web services via brokering systems and their experiments in mediating between problem solvers via partially shared ontologies. In the retrieval stage they focus on the transition from informal to formal media. In the publishing stage they demonstrate how formally expressed knowledge may be made more personal. The maintenance stage refers in their case to tools that respond to changes of language use in an organization over time.

Figure 1: AKT's six knowledge challenges

The other is a library and information science approach, as described in G. Hodge [Hodge 2000]. The focus of her paper is on digital archiving based on an information life cycle approach. She identifies best practices for archiving at all stages of the information management life cycle:

- creation,

- acquisition,

- cataloguing and identification,

- storage,

- preservation and

- access.

Creation is the act of producing the information product. Acquisition is related to collection development, and the two represent the stage in which the created object becomes part of the archive or the collection. Identification provides a unique key for finding the object and linking that object to other related objects. Cataloguing is important for organization and access. Storage is a passive stage in the life cycle, although G. Hodge reminds us that storage media and formats have changed over time, which caused some information to be lost maybe forever. Preservation refers to preserving the content as well as the look and feel of the object. Access needs to be ensured and enabling it comes as a result of the previous stages.

Those two models overlap to a certain degree: acquisition is an element of both life cycles, preservation is a narrower term than maintenance, and access is a broader term than retrieval. In addition, even taken together they might not be complete for any one given context.
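The overlap just described is itself a small vocabulary-mapping exercise and can be written down as such. The sketch below takes the stage names from the two lists above; the relation labels ("exact", "narrower", "broader") and the helper `related` are assumptions introduced for illustration. In particular, treating the two "acquisition" stages as an exact match is a simplification based only on the shared name.

```python
# Stage names as listed for the two life cycle models above.
AKT_STAGES = {"acquisition", "modelling", "reuse", "retrieval", "publishing", "maintenance"}
HODGE_STAGES = {
    "creation", "acquisition", "cataloguing and identification",
    "storage", "preservation", "access",
}

# (Hodge stage, relation, AKT stage). The relation labels are an assumption
# of this sketch; "exact" for acquisition is based only on the shared name.
MAPPINGS = [
    ("acquisition", "exact", "acquisition"),
    ("preservation", "narrower", "maintenance"),
    ("access", "broader", "retrieval"),
]


def related(hodge_stage):
    """Return (relation, AKT stage) pairs recorded for one Hodge stage."""
    return [(rel, akt) for hodge, rel, akt in MAPPINGS if hodge == hodge_stage]


# Stages that share a name across the two models.
same_name = AKT_STAGES & HODGE_STAGES

# Stages in either model that no mapping touches.
unmapped_hodge = HODGE_STAGES - {hodge for hodge, _, _ in MAPPINGS}
unmapped_akt = AKT_STAGES - {akt for _, _, akt in MAPPINGS}
```

Only one stage matches by name, and three stages on each side remain unmapped, which is one way of seeing that the models overlap only partially.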

3.2.3 Importance of Semantic Interoperability

Semantic interoperability issues seem relevant in each of the elements from the following extended list of information life cycle elements:

1. Creation, modification

2. Publication

3. Acquisition, selection, storage, system and collection building

4. Cataloguing (metadata, identification/naming, registration), indexing, knowledge organisation, knowledge representation, modelling

5. Integration, brokering, linking, syntactic and semantic interoperability engineering

6. Mediation (user interfaces, personalisation, reference, recommendation, transfer etc.)

7. Access, search and discovery

8. Use, shared application/collaboration, scholarly communication, annotation, evaluation, reuse, work environments

9. Maintenance

10. Archiving and preservation

In this information life cycle creators/authors, publishers, information systems managers, service providers and end-users are involved.

Semantic interoperability activities seem most important in elements 4, 5 and 7, whereas they are clearly less relevant in elements 2, 3, 9 and 10.

1. When creating or modifying information, terminological resources can help the creator achieve higher quality and greater clarity of expression and, if used at this stage, assist in metadata generation for documents and objects. Semantic interoperability measures could support a more controlled terminological development within and between disciplines and communities. Creators/authors also contribute to terminology development and thus help to develop and keep up to date the vocabularies they might use as authoring or searching support.

2. Publication might involve tasks mentioned under 1 (Creation) and under 4 (Cataloguing) etc. Publishers might provide activities mentioned under 1, 3-6, 9 and 10, similarly to other actors like libraries and other memory institutions and intermediaries. The phase of publication in the life cycle alone has no major benefit from semantic interoperability operations, other than including semantic and subject information, in the best of cases from controlled vocabularies, into the published documents.

3. Acquisition and collection building might use subject vocabularies and other semantic information when deciding about the inclusion of a document/object into a service or specific collection. Even automated procedures like harvesting need them to build subject specific collections (e.g. topical crawling). Interoperability activities allow including documents with equivalent but not identical terminology.

6. Information mediation activities can provide higher quality when based on semantically interoperable vocabularies, either operating in the background or offered to the user for navigation and support. Adaptive user interfaces can work with the users and translate to their own specific vocabulary and involve semantically corresponding information based on e.g. mapped vocabularies. They can provide different views of their resources and, thus, assist in personalisation as well as in reference and information transfer.

8. Terminological resources and semantic interoperability measures will improve use and evaluation of information. A greater benefit occurs in the scholarly communication and collaboration process with all related activities, again, clarifying the meaning of terms and concepts and their relationships.

9. Maintenance of information has to take care of the links to related semantic information and vocabularies and make sure that these remain available as well. Version control and history/scope notes or similar are needed to preserve the terminology and meaning of documents, to keep them understandable and to make it possible to follow changes over time.

10. Preservation of information includes taking care of the semantic information (the vocabularies, methods and tools) and not only the "raw" information of the documents (cf. 9). It may have extraordinarily high requirements for interoperability with technologies expected to be available for a long time.

For the life cycle elements 4, 5 and 7, semantic interoperability activities are of utmost importance. 5: Information integration, brokering etc. can basically not be carried out properly without such efforts. This whole report, including most of the use cases in Section 2.3, covers these three elements and provides examples from their realm.

Since vocabularies, semantic relationships and mappings are information (objects) as well, their own life cycle (creation, acquisition, collection, modelling, identification, integration, mediation, search, use, maintenance, preservation etc.) is of primary importance and a necessary prerequisite for improved semantic interoperability in all information life cycle contexts.

3.3 Use Cases

Every service trying to assemble data for cross search or to integrate data from (semantically) heterogeneous sources needs to address problems of semantic interoperability. This seems today to be the most frequent case in the digital information environment.

Here are a few short example use cases with some references to use cases developed in related projects and initiatives.

a) A web site gathers pages and documents from different research groups and sources. A controlled list of terms applying at least synonym control is needed to allow good search results (sufficient recall). The aim may be to enable cross-searching of databases, aggregation of the data contained within them, or the construction of software tools to present the information to the end-user, for example in a portal or Virtual Learning Environment.

Ex.: any kind of portal, OAI repository (e.g. arc), institutional repository system (DSpace, eprint etc.), even personal digital libraries.

Ex.: Use case Food Safety: Antimicrobials online A/OL [from SEMKOS]

A/OL is a web-based system that facilitates the dissemination of knowledge on preservation of food, extracted from scientific papers. Microbiology experts have assessed a large number of publications that contain data and results of experiments on microbial compounds. They extract the information that is usable in industrial applications, in particular on the effectiveness of the compounds in real food systems. The project is funded by the EU, the Dutch Ministry of Agriculture and DSM (multinational company producing food ingredients). In order to support the necessary quality of search in this new online database an ontology has been developed which needs extension and maintenance.

b) In order to provide for some precision in searching the same site, other semantic relationships between the key terms need to be specified, e.g. in a hierarchical thesaurus or a formal ontology. Dictionary, glossary or encyclopaedia information might be needed to clarify the exact meaning of the terms. A more advanced feature of search term expansion might be required.

[cf. SWAD-E use cases: Extend JISC-funded Subject Portal Project cross-search]

Both a) and b) may involve the creation and maintenance of general or domain specific vocabularies or the identification of suitable vocabularies, possibly to be merged and the markup/annotation/indexing of documents or metadata records with the new terms. [cf. SWAD-E use cases; OWL use case Web portals, Multimedia collections]
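The synonym control described in a) can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the synonym ring, the sample documents and the function names (build_index, expand_query, search) are hypothetical and are not taken from any of the projects cited above.

```python
# Hypothetical synonym rings: each set lists terms treated as equivalent.
SYNONYM_RINGS = [
    {"cassava", "manioc", "yuca"},
    {"car", "automobile"},
]

# Hypothetical documents gathered from different sources.
DOCUMENTS = {
    "doc1": "Mealybug damage to cassava crops",
    "doc2": "Manioc cultivation in West Africa",
    "doc3": "Wheat yields in Europe",
}


def build_index(rings):
    """Map every term to the full ring of equivalent terms it belongs to."""
    index = {}
    for ring in rings:
        normalised = {term.lower() for term in ring}
        for term in normalised:
            index[term] = normalised
    return index


def expand_query(term, index):
    """Return the term together with all of its controlled synonyms."""
    key = term.lower()
    return sorted(index.get(key, {key}))


def search(term, documents, index):
    """Return ids of documents that contain the term or any synonym."""
    variants = expand_query(term, index)
    return [doc_id for doc_id, text in documents.items()
            if any(variant in text.lower() for variant in variants)]


INDEX = build_index(SYNONYM_RINGS)
print(search("cassava", DOCUMENTS, INDEX))
```

Without the ring, a search for "cassava" would miss the document that only uses "manioc"; with it, recall improves at the cost of maintaining the term list.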

Authority Control Service [cf. SIMILE use case. OCLC service to provide subject classification and authority control to EPrints UK and DSpace: OCLC Metadata Switch Project].

Registries of (RDF) schemas/vocabularies (cf. SIMILE use cases).

Data mining and automated annotation on unstructured information, like journal abstracts [cf. SIMILE use cases].

SEMKOS Taxonomy use case, James Brooks [SEMKOS]:

"So, imagine a future scenario, perhaps for an abstracting & indexing service, or for annotation of PDFs, or for on-the-fly search engines using semantic expansion of a query:

My document is automatically indexed, the scientific names in it are, firstly, identified/recognized and, then, checked against recognized authority files. Spelling mistakes are recognized and simply corrected. After an invalid scientific name the correct name is added, say, in brackets OR added to an index OR mapped on the fly to the searched-for term, etc. If not as simple as this the document/resource is flagged to the attention of an editor. There could be automated addition of valid names to a master authority file OR one could automatically validate the status of names appearing in a thesaurus, so they are current. One could imagine software agents interrogating appropriate resources, mediating from place of description/validation/revision/detail addition to validated checklists/catalogues to index authority files, etc.

Now, there is often no single place that can be referred to, to validate a particular name for a particular (species of) organism. There are competing hierarchical schemes. There is often incomplete integration between catalogues for the same taxa in different geographical regions. A problem for indexing resources, and the subsequent retrieval of them by other users, is that of what is the correct name to use. Thus, an organism may be known, in any one language, by more than one common name. Scientific nomenclature is not stable; historically, more than one name has often been applied to the same organism, in which case rules of precedence apply; alternatively, there are changing ideas as to what constitutes the limits of a particular genus, and new combinations may result when genera are split or when a species placed in one genus is shown to have greater affinities with another. Not to speak of common names. Integration of taxonomy and object databases (e.g. in Natural History Museums) is necessary."

c) Mapping and/or translation of terms is required if the improved search is to be applied to documents in different languages (bi- and multi-lingual collections). [cf. SWAD-E use cases: Multilingual image retrieval]

d) In order to allow browsing of structured collections and sub-collections of documents a (hierarchical) structure of categories (classification system) needs to be applied or built. This would allow exploration or search of all appearances of e.g. dogs on the site without having to specify each and every individual member of the family using all potential variants of names.

Ex.: Collections of (metadata on) journal articles [OWL use case Design documentation]
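The benefit of the hierarchical structure in d) can be illustrated with a small Python sketch. The category tree, the indexed pages and the function names are hypothetical, and the hierarchy is assumed to be free of cycles.

```python
# Hypothetical fragment of a classification: broader category -> narrower ones.
NARROWER = {
    "dogs": ["terriers", "hounds"],
    "terriers": ["fox terrier"],
    "hounds": ["beagle", "greyhound"],
}

# Hypothetical pages, each indexed with exactly one category.
INDEXED = {"page1": "beagle", "page2": "fox terrier", "page3": "cats"}


def expand_category(category, narrower):
    """Return the category followed by all of its descendants (depth first)."""
    result = [category]
    for child in narrower.get(category, []):
        result.extend(expand_category(child, narrower))
    return result


def browse(category, narrower, indexed):
    """Return every page indexed under the category or any narrower category."""
    wanted = set(expand_category(category, narrower))
    return sorted(page for page, cat in indexed.items() if cat in wanted)


print(browse("dogs", NARROWER, INDEXED))
```

A single request for "dogs" thus retrieves pages indexed under "beagle" or "fox terrier" without the user having to name each member of the family.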

e) In the case of collections containing documents from or relating to different historical periods and/or with different political and cultural views, e.g. about Poland in the 18th Century, semantic interoperability measures identifying the different contexts and providing the appropriate vocabulary with relationship information (Poland’s extension, boundaries, legal status at different times, seen by different players) are required (example from Kim Veltman). A similar task is the mapping of different views from different user groups and their specific vocabularies [OWL use case Corporate web site management].

f) If well structured documents with different origins are gathered, semantic interoperability operations need to be applied in two steps: approaches described above need to be preceded by deciding the equivalence of the semantic definition of data buckets/ metadata elements/ bibliographic fields/ properties/ attributes/ tags or similar with subsequent mapping, joining or splitting decisions.

Ex.: the standardisation of different metadata profiles into one common application profile as done in project Renardus to allow common cross-searching.

Ex.: Government metadata interoperability initiatives (cf. Section 2.1)

[from the SEMKOS Ontologies use case]:

Databases may have their meaning locked in textual documentation or in the structural format of the database, for example in the tables and keys of a relational database. Consistent access to Knowledge Organisation Systems requires advice, tools and standards that enable KOS owners to

- extract the semantics and the data model of data sets

- make this information available in a consistent, standards-based format

- provide mechanisms to access this information via the network

g) In case the documents or their metadata use values drawn from different KOS (or, as the DCMI Grammar principles say, from different vocabulary encoding schemes, e.g. Library of Congress Subject Headings, North American Industry Classification System NAICS), these can be formally mapped (or linked via crosswalks) to improve Semantic Interoperability.

Ex.: Classification mapping in project Renardus to allow cross-browsing based on one common (switching) classification.

[cf. SWAD-E use cases: Extend JISC-funded Subject Portal Project cross-search]

[cf. SWAD-E use cases: Bized/SOSIG trials of data integration via classification mapping in the DESIRE project]

Ex.: Government interoperability work: Canada, e-GIF UK, EU (see Section 2.1)

Ex.: Geo-spatial integration via mapping of geographic names, places, regions, feature types etc.: Alexandria Digital Library.
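Mapping values from different KOS onto one common (switching) classification, as in g), might look as follows in Python. All scheme names, notations and records below are invented placeholders; they do not reproduce actual LCSH, NAICS or Renardus data.

```python
# Hypothetical mapping table: (local scheme, local value) -> switching class.
TO_SWITCHING = {
    ("schemeA", "Agriculture"): "S630",
    ("schemeB", "11"): "S630",
    ("schemeA", "Music"): "S780",
}

# Hypothetical metadata records indexed with different local schemes.
RECORDS = [
    {"id": "r1", "scheme": "schemeA", "subject": "Agriculture"},
    {"id": "r2", "scheme": "schemeB", "subject": "11"},
    {"id": "r3", "scheme": "schemeA", "subject": "Music"},
    {"id": "r4", "scheme": "schemeB", "subject": "99"},  # no mapping defined
]


def to_switching(scheme, value):
    """Return the switching class for a local value, or None if unmapped."""
    return TO_SWITCHING.get((scheme, value))


def cross_browse(switching_class, records):
    """Return ids of all records whose local subject maps to the given class."""
    return [record["id"] for record in records
            if to_switching(record["scheme"], record["subject"]) == switching_class]


print(cross_browse("S630", RECORDS))
```

Records whose values have no mapping (r4 here) stay invisible to cross-browsing, which is why the coverage of the mapping table matters as much as its correctness.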

The occurrence of different syntax encoding schemes (e.g. a date string formatted in accordance with a certain formal notation, according to DCMI) calls instead for a measure that assures syntactic interoperability, namely conversion into one common format.
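Such a conversion into one common format can be sketched for date strings. The list of input notations and the choice of ISO 8601 (YYYY-MM-DD) as the target are assumptions made for this illustration; note also that the day/month order of a string such as 11/02/1945 is itself an assumption about the source.

```python
from datetime import datetime

# Assumed input notations; a real service would list those used by its sources.
# "%B" matches English month names under Python's default "C" locale.
KNOWN_FORMATS = ["%Y-%m-%d", "%B %d, %Y", "%d/%m/%Y"]


def normalise_date(value):
    """Convert a date string in a known notation to ISO 8601 (YYYY-MM-DD)."""
    cleaned = value.strip().rstrip(".")
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date().isoformat()
        except ValueError:
            continue  # try the next notation
    return None  # unknown notation: leave for manual inspection


print(normalise_date("February 11, 1945."))
```

Strings that match none of the listed notations are returned as None rather than guessed at, so that they can be inspected by hand.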

These use cases could be written from the perspective of different actors or corresponding use cases could be added for:

- information creators (authors and metadata creators of documents, businesses, government agencies)

- information/service providers, intermediaries; machine-to-machine (vocabulary builders, web portal builders, collection organisers, indexers - assuming manual indexing, publishers of documents and databases, government agencies)

- end users trying to discover relevant information (information searchers, citizens, decision makers (decision support), educational needs, industrial contexts, electronic commerce)

All the measures mentioned involve dealing with and improving semantic interoperability. Approaches to be used are creating, extending, revising, maintaining, identifying, sharing, representing, using, syndicating, translating, mapping or merging vocabularies and applying them to the information system in order to allow human users (or machines) to improve the quality of their information discovery and search.

The economic value of improved Semantic Interoperability is described in the following use case:

Pest species in biological control [from: SEMKOS use case, Application B.3 page 18]

"Natural History Museums can be considered as archives of biodiversity. They harbour millions of specimens, providing first-hand information about geographical and historical presence of organisms, their characteristics, ecological environments, host-parasite relationships etc. The classification of these specimens/organisms is based on a taxonomic KOS, in which scientific names are internationally standardized, but which are fragmented and exist in many versions.

An interesting example taken from Systematics Agenda 2000 elucidates the economic importance of a reliable taxonomic identification of a pest species in biological control: In 1974 an introduced mealybug species was discovered in Zaire. This pest was costing cassava growers in West Africa nearly 1,4 billion dollars in damage each year. The species was described as Phenacoccus manihoti and a search was made for biological control agents in northern South America. When no effective parasites was discovered, a mealybug specialist was asked to re-examine the species and found that a closely related species, P. herreni, was found primarily in northern South America and that P. manihoti actually occurred further south. With this information, effective parasites were located and introduced into the infested areas of Africa.

The current reality of systems, that return on a question about spiders mostly Spiderman literature, is obviously inadequate."

4. Theoretical Considerations

As outlined in the previous chapters, the achievement of semantic interoperability is a complex task, which affects multiple levels and functions of information systems and the information process. In this section, we propose a systematic requirements analysis of the different constituent functions necessary to achieve overall semantic interoperability in a digital library environment. We begin with a clarification of terminology that tends to be used inconsistently between the computer science and library communities.

4.1 Terminology

We propose here one possible definition for each term for the purpose of this document. An exhaustive survey, e.g. of definitions of ontology, may be interesting but would do little to make the intended meaning of this report clearer to the reader.

4.1.1 Universals and Particulars

From a knowledge representation perspective, concepts can be divided into universals and particulars. The fundamental ontological distinction between universals and particulars can be informally understood by considering their relationship with instantiation: particulars are entities that have no instances in any possible world; universals are entities that do have instances. Classes and properties (corresponding to predicates in a logical language) are usually considered to be universals [after Gangemi et al. 2002, pp. 166-181]. For example, "Person" or "A being married to B" are universals, whereas "John", "Mary" and "John is married to Mary" are particulars. General nouns and verbs of a natural language can be regarded as describing universals (polysemy notwithstanding), whereas names describe particulars. [Steven Pinker 1994] describes the distinction between general nouns, verbs and proper names as innate functions of the human brain.

4.1.2 Ontology and Vocabulary

We follow here the definition of [Guarino 1998]:

An ontology is a logical theory accounting for the intended meaning of a formal vocabulary, i.e. its ontological commitment to a particular conceptualization of the world. The intended models of a logical language using such a vocabulary are constrained by its ontological commitment. An ontology indirectly reflects this commitment (and the underlying conceptualization) by approximating these intended models.

Guarino further defines a model as a description of a particular state of affairs, a world structure, whereas the conceptualisation describes the possible states of affairs or possible worlds of a domain consisting of individual items. Further, particular states of affairs are seen as instances (extension) of the conceptualisation. The ontology only approximates the conceptualisation, because its logical rules may not be enough to define all constraints we observe or regard as valid in the real world. In this sense, the formal vocabulary is a part of the ontology, but not an ontology in itself, since an ontology is a logical theory. The symbols of this vocabulary would normally refer to universals, as do the nouns and verbs of natural languages. Following this definition, a gazetteer is not an ontology, because it describes a particular world structure. A simple thesaurus which uses the broader term generic relationship [ISO2788] in the sense of IsA between concepts (universals) can, however, be regarded as a very simple form of an ontology. A controlled vocabulary clearly does not qualify as an ontology, but could be used to create an ontology [Qin, Jian & Paling, Stephen, 2001]. As the extent of formalization is not defined, opinions vary about the point at which a terminological system qualifies as an ontology.

4.1.3 Language and Vocabulary

A vocabulary is a set of symbols. A language consists of a vocabulary and a grammar that defines the allowed constructs of this language. A vocabulary alone does not qualify as a language.

According to http://www.wordiq.com: In mathematics, logic and computer science, a formal language is a set of finite-length words (i.e. character strings) drawn from some finite alphabet.

In computer science a formal grammar is an abstract structure that describes a formal language precisely: i.e., a set of rules that mathematically delineates a (usually infinite) set of finite-length strings over a (usually finite) alphabet. Formal grammars are so named by analogy to grammar in human languages.

Formal grammars fall into two main categories: generative and analytic.

- A generative grammar, the most well known kind, is a set of rules by which all possible strings in the language to be described can be generated by successively rewriting strings starting from a designated start symbol. A generative grammar in effect formalizes an algorithm that generates strings in the language.

- An analytic grammar, in contrast, is a set of rules that assume an arbitrary string to be given as input, and which successively reduce or analyze that input string to yield a final Boolean, "yes/no" result indicating whether or not the input string is a member of the language described by the grammar. An analytic grammar in effect formally describes a parser for a language.

In short, an analytic grammar describes how to read a language, whereas a generative grammar describes how to write it.

Note that the term alphabet in the above is synonymous with the term vocabulary, and not with what is commonly understood as an alphabet; likewise, the term word in the above is synonymous with the term phrase, and not with what is commonly understood as a word. This is a frequent source of confusion. For the general reader, the above should therefore be read as: a formal language is a set of possible, finite-length phrases, and not a vocabulary.
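The difference between the generative and the analytic view of a grammar can be made concrete with a toy example in Python. The grammar chosen here (S -> a S b | a b, over the alphabet {a, b}) and the function names are purely illustrative assumptions.

```python
def generate(max_depth):
    """Generative view: derive strings from the start symbol S
    using the rules S -> a S b and S -> a b."""
    strings = []
    form = "S"
    for _ in range(max_depth):
        strings.append(form.replace("S", "ab"))  # apply S -> a b and stop
        form = form.replace("S", "aSb")          # apply S -> a S b and go on
    return strings


def accepts(string):
    """Analytic view: reduce the input by undoing the rules and
    return a yes/no answer on membership in the language."""
    while string not in ("", "ab"):
        if len(string) >= 2 and string[0] == "a" and string[-1] == "b":
            string = string[1:-1]  # undo one application of S -> a S b
        else:
            return False
    return string == "ab"


print(generate(3))
print(accepts("aabb"), accepts("abab"))
```

Both functions describe the same language: every string produced by generate is accepted by accepts, while a string such as "abab" cannot be reduced and is rejected.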

The linguist Noam Chomsky offers this definition of human language: First, he says that human language has structural principles such as grammar or a system of rules and principles that specify the properties of its expression. Second, human language has various physical mechanisms of which little is known but it does seem clear that "lateralization plays a crucial role and that there are special language centres, perhaps linked to the auditory and vocal systems". The third quality of human language is its manner of use. Human language is used for expression of thought, for establishing social relationships, for communication of information and for clarifying ideas. Another characteristic of human language is that it has phylogenetic development in the sense that language evolved after humans had separated from the other primates. Therefore language must have had a selective advantage and must coincide with the proliferation of the human species. Finally, human language has been integrated into a system of a cognitive structure [Chomsky 1980, cited after Britta Osthaus].

Normally, a language also commits to the intended meaning of its symbols and constructs. In contrast to an ontology, it aims at enabling descriptions of states of affairs without any intention to approximate the possible worlds. So, phrases like “my dog is a cat” or “the ship rains under the mountains” are perfect English but violate our conceptualisation.

A suitable logical language, such as OWL, TELOS, KIF, RDF/S etc., allows for describing models of a particular state of affairs as instances of concepts defined in a formal ontology. Then, this language together with the vocabulary of the ontology can be seen as a specific language to describe valid models of this ontology.

4.1.4 KOS and Vocabulary

The term Knowledge Organization Systems (KOS) refers to controlled vocabularies as well as to systems/tools/services developed to organise knowledge (tools that present the organized interpretation of knowledge structures [Zeng 2004]). For the purpose of this report, we distinguish the contents, i.e. the vocabulary and the associated logical relationships (KOS in the narrower sense), from the software that may present the content. Some may require a KOS to implement some logical structure; in the context of this report, however, we use the term for all kinds of knowledge organized as reference for use in information systems: from simple term lists up to taxonomies [see definition in NKOS 2000; HILT 2001, App. F: Glossary], classification systems and elaborated ontologies on the side of the universals, and authority files and gazetteers on the side of particulars.

In this sense, uncontrolled vocabularies, i.e. term lists without an organized editorial control, and controlled vocabularies, i.e. term lists with an organized editorial control, are regarded as special cases of KOS, and all kinds of KOS that deal with universals must contain a vocabulary in the narrower sense. We propose to distinguish proper names, such as place names or names of persons, from terms due to their different role and nature. Even though the term “vocabulary” is frequently used as a synonym for KOS as we define it here, we propose not to use it in this sense because of the many ambiguities its use introduces with respect to other senses. In particular it may lead to a confusion of part and whole.

From an environmental perspective we define an “NKOS” as: Networked KOS, interactive information devices published in digital format. These are primarily aimed at supporting the description and retrieval of heterogeneous information resources on the Internet [Zeng 2004].

4.1.5 Schema, Data Model and Conceptual Model

The term schema typically stresses the structural aspect and even the storage format, although with more modern DBMS the actual physical format is more and more hidden from, and irrelevant to, the designer. For example, the on-line dictionary SearchDatabase.com (http://searchdatabase.techtarget.com) writes:

“In computer programming, a schema (pronounced SKEE-mah) is the organization or structure for a database. The activity of data modelling leads to a schema. (The plural form is schemata. The term is from a Greek word for "form" or "figure.") The term is used in discussing both relational databases and object-oriented databases. The term sometimes seems to refer to a visualization of a structure and sometimes to a formal text-oriented description.”

Typically, the term schema is used to relate to the data structure as implemented, and not so much to refer to its intended meaning, in particular the meaning it has for the real world described by instances of the schema. As a more general term we prefer data structure, defined in the same source as:

“A data structure is a specialized format for organizing and storing data. General data structure types include the array, the file, the record, the table, the tree, and so on. Any data structure is designed to organize data to suit a specific purpose so that it can be accessed and worked with in appropriate ways. In computer programming, a data structure may be selected or designed to store data for the purpose of working on it with various algorithms.”

From the point of view of standardization and semantic interoperability, this term makes a relevant abstraction from the internal organization of documents, metadata and databases.

Whereas computer science traditionally uses the term data model for the schema definition constructs, such as entity-relationship (E-R) or XML DTD, others use it for the product of the activity of data modelling, i.e. as synonymous with schema. We propose to avoid the term and to use schema or conceptual model instead, as appropriate.

On the other hand, a conceptual schema is typically referred to as a map of concepts and their relationships. The difference between a conceptual schema and an implemented schema typically lies in the omission of data elements for the control of the information elements in the database, such as keys, oid, locking flags, timestamps, etc., as well as in the explicit reference to the real world concepts referred to by the schema constructs. The term conceptual model comes even closer to a logical formulation of the possible states of an application domain, so that normally a conceptual model can be regarded as a kind of ontology.

In many cases a data structure, abstracted from its use to specify a storage layout, can also be seen as a special case of a formal language for making statements about particular states of affairs. Its elements (fields, tables etc.) constitute a formal vocabulary, such as the Dublin Core Element Set. Similarly, an ontology can be used to define such a formal language, and hence data structures (such as the RDFS version of the CIDOC CRM).

However, this argument should not be used to regard the field names of a metadata structure as a kind of ontology. Most data structures do not qualify as ontologies, as data structure element definitions lack any formal approach to approximate a conceptualisation, e.g. the field publisher in DCES can be interpreted in at least three ways. Whereas concepts of an ontology are meaningful out of the context of a data structure, field names typically make sense only in the specific element hierarchy or connection. We regard the natural language interpretation of the gibberish of field names out of context as generally misleading, e.g. a field age in the CIDOC Relational Model has to be interpreted as stage of maturity of the referred art object; a field destination in the MIDAS schema at English Heritage is interpreted as destination of a wrecked ship on its last mission.

Metadata structures, often called metadata vocabularies or metadata frameworks, should be regarded in the first place as schemas or conceptual schemas. Only in some cases may they be regarded as direct derivatives of an ontology.

4.1.6 Mapping and Crosswalks

The last term to be described here is the concept of schema mapping.

Semantic World defines mapping as: “the process of associating elements of one set with elements of another set, or the set of associations that come out of such a process. Often refers to the formally described relationship between two schemas, or between a schema and a central model.” (http://www.semanticworld.org).

In the metadata community, the term crosswalk became fashionable:

A crosswalk is a semantic and/or technical mapping (sometimes both) of one metadata framework to another metadata framework.

Semantic mapping example:

- Dublin Core element title corresponds to the ADN element of title

- Dublin Core element type corresponds to the ADN element of learning resource type

Technical mapping example

- Technical mapping uses various programmatic solutions to transform metadata records or computer files. DLESE uses eXtensible Stylesheet Language Transformations (XSLT) to programmatically change eXtensible Markup Language (XML) metadata records to other formats. For example, the following shows Dublin Core XML elements and their corresponding ADN XML elements, http://www.dlese.org/Metadata/crosswalks/

Obviously both refer to the same process. What is called semantic mapping above has lately also been referred to in computer science as schema matching, whereas mapping implies the actual transformation algorithm. We prefer this definition:

“A schema mapping is the definition of a transformation of each instance of a data structure A into an instance of a data structure B that preserves the intended meaning of the original information and that can be implemented by an automated algorithm. The application domain expert ultimately judges the preservation of the intended meaning. A partial mapping may lose a clearly defined part of the original information. The actual schema map, i.e. the product of a mapping process, may also be called a functor for the data translation process.”

We prefer the term schema matching to semantic mapping, because any mapping should be semantically correct.
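The definition of a schema mapping given above, including the notion of a partial mapping, can be sketched in Python. The crosswalk table only contains the two element correspondences named in the semantic mapping example; the target names are written as in that example and are not the actual ADN XML element names, and the function name and sample record are hypothetical.

```python
# Simplified crosswalk: Dublin Core element -> target element (names assumed).
CROSSWALK = {
    "title": "title",
    "type": "learning resource type",
}

# Hypothetical Dublin Core record; every element may carry several values.
DC_RECORD = {
    "title": ["Allied Leaders at Yalta"],
    "type": ["Still Image"],
    "publisher": ["United Press International (UPI)"],
}


def map_record(record, crosswalk):
    """Transform one instance of structure A into an instance of structure B.

    Returns the mapped record and, separately, the clearly defined part of
    the original information that this partial mapping loses.
    """
    mapped, lost = {}, {}
    for element, values in record.items():
        target = crosswalk.get(element)
        if target is None:
            lost[element] = list(values)
        else:
            mapped.setdefault(target, []).extend(values)
    return mapped, lost


mapped, lost = map_record(DC_RECORD, CROSSWALK)
print(mapped)
print(lost)
```

Reporting the lost elements explicitly makes the partiality of the mapping visible; whether the mapped part preserves the intended meaning remains a judgement for the domain expert, which code of this kind cannot replace.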

4.2 Constituents of Semantic Interoperability in Digital Library Environments

In order to make the following distinction more obvious, let us consider an artificial, but realistic demonstration case about the integration of information objects related to the Yalta Conference in February 1945. This was the event officially designating the end of WWII. One can hardly find a better-documented event in history. We have created the demonstration metadata below from the information we found associated with the objects. The titles are as we have found them. The scenario is about how to make these information objects accessible by one simple request.

a) The State Department of the United States holds a copy of the Yalta Agreement. One paragraph begins: "The following declaration has been approved: The Premier of the Union of Soviet Socialist Republics, the Prime Minister of the United Kingdom and the President of the United States of America have consulted with each other in the common interests of the people of their countries and those of liberated Europe. They jointly declare their mutual agreement to concert" [http://www.fordham.edu/halsall/mod/1945YALTA.html].

A Dublin Core record may be:

Type: Text

Title: Protocol of Proceedings of Crimea Conference

Title: Declaration of Liberated Europe

Date: February 11, 1945.

Creator: The Premier of the Union of Soviet Socialist Republics

Creator: The Prime Minister of the United Kingdom

Creator: The President of the United States of America

Publisher: State Department

Subject: Postwar division of Europe and Japan

Identifier: ...

b) The Bettmann Archive in New York holds a world-famous photo of this event (Fig. 1). A Dublin Core record for this photo might be:

Type: Still Image

Title: Allied Leaders at Yalta

Date: 1945

Publisher: United Press International (UPI)

Source: The Bettmann Archive

RightsHolder: Corbis

Subject: Churchill; Roosevelt; Stalin

Figure 1: Allied Leaders at Yalta

The striking point is that the two metadata records have nothing in common other than 1945, which is hardly a distinctive attribute.

c) An integrating piece of information comes from the Thesaurus of Geographic Names [TGN, http://www.getty.edu/research/tools/vocabulary/tgn/index.html], which may be captured by the following metadata:

TGN Id: 7012124

Names: Yalta (C,V), Jalta (C,V)

Types: inhabited place (C), city (C)

Position: Lat: 44 30 N, Long: 034 10 E

Hierarchy: Europe (continent) < Ukrayina (nation) < Krym (autonomous republic)

Note: Located on S shore of Crimean Peninsula; site of conference between Allied powers in WW II in 1945; is a vacation resort noted for pleasant climate, & coastal & mountain scenery; produces wine, canned fruit & tobacco products.

Source: TGN, Thesaurus of Geographic Names

This could be, at least partly, formatted in DC as well:

Identifier: http://www.getty.edu/research/tools/vocabulary/tgn/7012124

Title: Yalta (C,V)

(Title) Alternative: Jalta (C,V)

Type: inhabited place (C)

Type: city (C)

(Coverage) Spatial: Lat: 44 30 N, Long: 034 10 E [DCMI encoding scheme=Box]

Source: TGN, Thesaurus of Geographic Names

Description: Located on S shore of Crimean Peninsula; site of conference between Allied powers in WW II in 1945; is a vacation resort noted for pleasant climate, & coastal & mountain scenery; produces wine, canned fruit & tobacco products.

(Relation) IsPartOf: Europe (continent); < Ukrayina (nation); < Krym (autonomous republic)

The keyword Crimea can finally be found under the foreign names for Krym, i.e. via another record (id=1003381). This example demonstrates a fundamental problem: in order to retrieve information related to one specific subject, information from multiple sources, including background knowledge, must be virtually or physically integrated. Integration affects:

- Metadata structure and its intended meaning, such as Creator, Reference.

- The meaning of terminology and related background knowledge, such as Allied Leaders and Allied Powers, The Prime Minister of the United Kingdom and Churchill.

- The use of names and identifiers for concepts and real world items in data fields, such as Yalta, Jalta and TGN7012124.
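The effect of background knowledge on retrieval can be sketched as follows. This is a minimal illustration, not a proposed retrieval algorithm: the records are reduced to their titles, the `variants` and `part_of` tables are hand-copied from the TGN example above, and the function names `expand` and `search` are assumptions.

```python
# Demonstration records (a) and (b), reduced to the fields needed here.
records = {
    "agreement": {
        "Title": [
            "Protocol of Proceedings of Crimea Conference",
            "Declaration of Liberated Europe",
        ],
        "Date": "February 11, 1945",
    },
    "photo": {"Title": ["Allied Leaders at Yalta"], "Date": "1945"},
}

# Background knowledge from the TGN example: name variants of a place,
# its parent in the hierarchy, and the foreign name of that parent.
variants = {"Yalta": ["Jalta"], "Krym": ["Crimea"]}
part_of = {"Yalta": "Krym"}


def expand(term):
    """Collect the term, its parent place (one step up) and all name variants."""
    terms = {term}
    parent = part_of.get(term)
    if parent:
        terms.add(parent)
    for t in list(terms):
        terms.update(variants.get(t, []))
    return terms


def search(term, use_background):
    """Return the ids of records whose titles contain any of the search terms."""
    terms = expand(term) if use_background else {term}
    hits = []
    for rec_id, rec in records.items():
        text = " ".join(rec["Title"]).lower()
        if any(t.lower() in text for t in terms):
            hits.append(rec_id)
    return sorted(hits)


plain = search("Yalta", use_background=False)      # finds the photo only
integrated = search("Yalta", use_background=True)  # finds both objects
```

Expanding the request to the parent place is a design choice made for this sketch; in a real collection it would also retrieve objects about other places in Krym, so it trades precision for recall.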

As stated in section 1, semantic interoperability means the capability of different information systems to communicate information consistent with the intended meaning. Information integration is only one possible result of successful communication. Other forms are querying, information extraction and information transformation, in particular from legacy systems to new ones. Ever since different human languages emerged, communication has been achievable in two ways: either everyone is forced to learn and use the same language, or translators are found who know how to interpret the information of one participant sufficiently well for another. The first approach is that of proactive standardization, the second that of reactive interpretation. This choice applies to all levels and functions of semantic interoperability and is a major criterion for distinguishing the various methods.

4.3 Standardization versus Interpretation

Standardization in order to achieve semantic interoperability in a digital library environment may comprise: the form and meaning of metadata and content schemata; shared concepts defined in KOS; use of names and construction of identifiers for concepts and real world items.

Standardization has the following advantages:

- Information can be immediately communicated (transferred, integrated, merged etc.) without transformation.

- Information can be communicated without alteration.

- Information can be kept in a single form.

- Candidate sources can be required to supply information that is functionally complete for an envisaged integrated service.

The disadvantages are:

- Source information needs adaptation to the standard.

- The effort of producing a standard, such as a terminology, can be very high.

- The standard has to foresee all future use. Introducing a new element is time-consuming and may cause upward-compatibility problems. In a changing world, the standard will necessarily lag behind the demands of current applications.

- There is only one standard for a domain, and it cannot be optimal for all applications. The necessary selection becomes a political decision.

- Adaptation of information to a standard may require interpretation (manual or automatic).

- Adaptation of information to a standard may result in information loss.

Mechanical interpretation in a digital library environment may comprise: the mapping of metadata and content schemata (sometimes called crosswalks); correlation of concepts defined in KOS (sometimes called cross-concordances); translation of names and reformatting of identifiers for concepts and real world items.

Interpretation has the following advantages:

- Source information, in particular legacy data, needs no adaptation.

- Sources can serve additional local functions.

- Only application-relevant parts need interpretation.

- Interpretation can be optimised for multiple functions.

- Interpreters can easily be adapted to changes.

The disadvantages are:

- Interpretation needs processing time during communication.

- The manual effort of producing the knowledge base for an interpreter, such as correlation tables for terminologies, can be very high (however, there are applications of automatic generation).

- The number of interpreters needed increases drastically with the number of formats.

- Interpretation of information may result in information loss, in particular affecting recall or precision of the overall system.

- Mechanical interpretation may not be possible at all.

The conclusion is that a comprehensive approach to semantic interoperability must consider an optimal combination of both alternatives for all functions:

A standard is elegant and efficient for specific applications. It is appropriate for problems with a low degree of necessary diversity and with high long-term stability. It hinders evolution and fruitful diversity. It reduces information. In order to be applied, it may need interpreters to generate input in standard form. Additional functions may need interpreters. A typical example is the Dublin Core Element Set.

Some of the inflexibility of standards can be avoided by designing extensible or modular standards with core functionalities and community-specific extensions that do not invalidate the core functions, such as Dublin Core qualifiers. The CIDOC CRM [ISO21127] is also designed as a core standard. Its extension capability is based on the well-founded specialization (IsA) of object-oriented schemata. The combination of namespace schemas into application profiles [Dekkers 2001, Heery, R., Patel, M.] falls into this category. The idea has not been applied to KOS so far; however, some namespace assignment policies can be seen in this light.
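How an extension can leave the core functions intact may be illustrated with Dublin Core qualifiers. The sketch below assumes a "dumb-down" step in which each refinement is replaced by the core element it refines. The `REFINES` table lists only the three refinements used in the TGN example above, and the function name `dumb_down` is hypothetical.

```python
# Refinement -> core element it refines (only the refinements used in
# the TGN example; a complete table would be taken from the standard itself).
REFINES = {
    "alternative": "title",
    "spatial": "coverage",
    "isPartOf": "relation",
}


def dumb_down(record):
    """Replace each refinement by its core element, keeping all values."""
    simple = {}
    for element, values in record.items():
        core = REFINES.get(element, element)
        simple.setdefault(core, []).extend(values)
    return simple


qualified = {
    "title": ["Yalta"],
    "alternative": ["Jalta"],
    "spatial": ["Lat: 44 30 N, Long: 034 10 E"],
    "isPartOf": ["Krym (autonomous republic)"],
}
simple = dumb_down(qualified)
```

A service that knows only the core elements can still use the reduced record, at the cost of losing the distinction between, for instance, a title and an alternative title.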

A standard is inevitable when mission-critical data have to be communicated, i.e. in cases where certain data elements are necessary and inexact equivalence of meaning is not acceptable. In that case, the component sources have to commit to a common set of concepts or formats, sometimes called an Interlingua. Such a role is played, for example, by the EBTI and the EET thesaurus of the European Commission, which serve communication about customs regulation and education respectively. Obviously, a European law cannot be enforced on the basis of inexact matches between product terms in different European languages.

Interpreters are effective in environments with a high degree of necessary diversity and low long-term stability. They are elegant for cases where only smaller portions of the source data have to be communicated to a target. Whereas a standard needs to support the sum of all functions in the intended integrated environment, interpreters can be flexible and selective, serving the needs of smaller subgroups within the integrated environment.

If the number of formats in use increases, interpretation may need to go through a common switching language, which reduces the number of interpreters needed but increases the loss of precision. Effectively, such a switching language is nothing other than another standard. The LIMBER and SCHOLNET Projects took this approach with an English thesaurus in the middle. The CIDOC CRM is designed to be a switching language for schema mappings.
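The saving can be made explicit with a small calculation. The sketch assumes that interpreters are directional, so that direct interpretation needs one interpreter per ordered pair of formats, while a switching language needs one interpreter into it and one out of it per format. Both function names are hypothetical.

```python
def direct_interpreters(n_formats):
    """One interpreter per ordered pair of distinct formats: n * (n - 1)."""
    return n_formats * (n_formats - 1)


def hub_interpreters(n_formats):
    """One interpreter into and one out of the switching language: 2 * n."""
    return 2 * n_formats


# (direct, via switching language) for a few numbers of formats
counts = {n: (direct_interpreters(n), hub_interpreters(n)) for n in (3, 5, 10, 20)}
```

Under these assumptions the two approaches break even at three formats; beyond that the direct approach grows quadratically and the switching language linearly. The calculation says nothing about the loss of precision incurred by translating twice.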

4.4 Levels of Semantic Interoperability in Digital Library Environments

With current digital library technology, one can clearly distinguish three levels of information that are treated in distinct ways and give rise to distinct methods for addressing semantic interoperability. These are:

- Data structures, be it metadata, content data, collection management data, service description data.

- Categorical data, i.e. data that refer to universals, such as classification, typologies and general subjects. Theoretically, one can regard all numbers as belonging to this category.

- Factual data, i.e. data that refer to particulars, such as people, items, places.

4.4.1 Semantic Interoperability and Data Structures

As outlined above, data structures describe possible states of affairs and support information control and management functions. The control and management functions are normally local to a system and not an object of semantic interoperability. A conceptual model can describe the others. From an ontological point of view, the respective elements of a data structure can be related to universals of the domain, but not to particulars. Characteristically, data structures encode the most relevant relationships in the domain, which should be kept explicit and intact. They provide only very abstract individual concepts, such as resource, agent, date, etc. The information content of data structures is extraordinarily small. It is very stable over time, because it relates directly to vital functions built into the system. Consequently, they are first-class candidates for standardization. Nevertheless, as the example in section 3.2 and the hundreds of metadata standards demonstrate, flexible and interpretative approaches are also necessary as described above.

The interpretative approach is based on schema mapping. It can be divided into the mediation approach and the data warehouse-style approach. In the first, queries are transformed to fit a source schema, and then only the answer set is transformed into the target format. In the second, all source data are transformed into a target format beforehand. Which approach is better depends on the update rate of the sources, their number and the complexity of the schema mapping. Several papers by Calvanese and Lenzerini deal with these issues in much detail [see e.g. Calvanese et al. 1998].
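The difference between the two approaches can be sketched with a single source and a one-to-one field mapping. The source records, field names and function names below are hypothetical; real mediators also have to handle mappings that are not simple renamings.

```python
# Hypothetical source in its own schema; the target schema uses Title/Date.
SOURCE = [
    {"caption": "Allied Leaders at Yalta", "taken": "1945"},
    {"caption": "Hypothetical later photo", "taken": "1950"},
]
TARGET_TO_SOURCE = {"Title": "caption", "Date": "taken"}
SOURCE_TO_TARGET = {src: tgt for tgt, src in TARGET_TO_SOURCE.items()}


def to_target(record):
    """Translate one source record into the target format."""
    return {SOURCE_TO_TARGET[field]: value for field, value in record.items()}


def mediated_query(field, value):
    """Mediation: rewrite the query for the source, translate only the answers."""
    source_field = TARGET_TO_SOURCE[field]
    answers = [r for r in SOURCE if r[source_field] == value]
    return [to_target(r) for r in answers]


def build_warehouse():
    """Warehouse style: translate all source data in advance."""
    return [to_target(r) for r in SOURCE]


def warehouse_query(warehouse, field, value):
    """Query the pre-translated copy directly in the target schema."""
    return [r for r in warehouse if r[field] == value]


warehouse = build_warehouse()
via_mediation = mediated_query("Date", "1945")
via_warehouse = warehouse_query(warehouse, "Date", "1945")
```

Both routes return the same answer here. The warehouse copy has to be rebuilt whenever the source changes, whereas mediation pays the translation cost at every query, which is why the update rate of the sources matters for the choice.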

A global schema serving as switching language for multi-step mapping or for information integration must be object-oriented, because it is not possible otherwise to relate the different abstraction levels of the universals of the involved data structures. For example, one table may be about physical books, another about electronic documents, a third about tourist guides. It is economical and effective to develop the mapping services in a wider domain, using an overarching ontology consisting mainly of relationships (such as the CIDOC CRM), as the necessary information is very compact and stable.

Further, abstractions that may be fixed in one schema by a respective table or relation type may be categorical data in another. Therefore, mapping algorithms depend in general on categorical data in the source instances. Frequently, these categorical data are locally standardized in KOS and still high-level concepts. Only if the logical structure of the respective KOS and the conceptualisation behind the involved data structures are compatible, can such mappings be implemented. This problem gives rise to a demand for standardizing or harmonizing the upper levels of KOS with categorical data.
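The dependence of a mapping on categorical data can be illustrated as follows. In this sketch, the target class of a source row is not fixed by its table but is derived from a type term by walking up a local KOS. The KOS fragment, the target class names and the sample rows are all hypothetical.

```python
# Hypothetical local KOS: term -> broader term (IsA).
BROADER = {
    "tourist guide": "physical book",
    "physical book": "physical object",
    "e-journal article": "electronic document",
}

# Hypothetical target schema: high-level concept -> target class.
TARGET_CLASS = {
    "physical object": "PhysicalItem",
    "electronic document": "DigitalItem",
}


def target_class(term):
    """Walk up the KOS until a concept known to the target schema is reached."""
    while term is not None:
        if term in TARGET_CLASS:
            return TARGET_CLASS[term]
        term = BROADER.get(term)
    return None  # KOS and target schema are not compatible for this term


rows = [
    {"title": "hypothetical guide", "type": "tourist guide"},
    {"title": "hypothetical article", "type": "e-journal article"},
    {"title": "hypothetical item", "type": "unknown genre"},
]
classes = [target_class(row["type"]) for row in rows]
```

The last row cannot be mapped because its term has no path to a high-level concept shared with the target schema, which is the situation that harmonized upper levels of KOS are meant to prevent.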

4.4.2 Categorical Data

The volume of categorical data is immense. Nations and communities build their own terminologies, ranging from thousands to millions of terms. Terminologies are in constant evolution as new classes of phenomena come up or attract scientific or public interest. From an ontological point of view, terminologies are rich in individual concepts (classes) and very poor in relationships, except for IsA relations. Some researchers assume that all terminologies could be developed into ontologies. However, it is still theoretically unclear whether all human concepts as they appear in our data can actually be reduced to a rigid logical definition [see G. Lakoff, 1987], and whether that effort will pay off. Obviously, as high-level concepts are fewer and more fundamental, formal treatment should start top-down.